Is AI really that thirsty?

By Xavier Evans & Louisa Mesnard.

We have written before about the energy demand impact of AI infrastructure (see here and here). A less discussed concern in AI infrastructure development is the use of freshwater for cooling. A common refrain is that every ChatGPT query uses a bottle of water, while Sam Altman insists that an average query to the model uses one-fifteenth of a teaspoon.

So which is it? This edition is inspired by the excellent article by Molly Taft in WIRED, and establishes the context for our next edition that will examine the new technologies coming to the fore in data centre cooling.

Today, the standard approach to data centre cooling is to lower the temperature of the ambient air in the buildings where chips are housed. This air cooling is primarily evaporative: heat is absorbed as water evaporates in large cooling towers.

Other options include (and will be covered in more detail in the following edition):

Direct-to-chip cooling, where liquid flows through cold plates mounted directly on CPUs or GPUs.

Cooling at the silicon layer, where microfluidic cooling is integrated into the chip package itself.

Immersion cooling, where servers or blades are submerged in a dielectric liquid bath.

Advanced materials and internal structures, which significantly improve heat transfer inside data-centre cold plates and heat exchangers.

At present, most cooling systems rely on fresh water drawn from local sources. Saline and brackish water are far more abundant, but corrode cooling equipment. As a result, non-potable fresh water is commonly used for cooling. In the US, roughly 95% of data centre water usage is drawn from local municipal sources, despite fresh water accounting for only about 3% of Earth’s total water. To reduce demand on fresh water supplies, some major data centre operators are increasingly using recycled wastewater for cooling.

Any analysis of water must distinguish between water usage and water consumption. Depending on the process, water can be used and then returned to its original source, often with some treatment to reduce environmental impact. Water consumption tracks the water that is used and not returned to its original source, which has wider implications ranging from local environments and communities all the way to sea levels and the rotational axis of the planet.

One final caveat is that this edition examines the use of water in data centres themselves, and not the indirect water use and consumption that comes from semiconductor manufacturing or from generating the energy that powers the data centres. The indirect water footprint is included in some analyses, but can be effectively mitigated by new production methods (which we will cover in a future edition) and broader transitions to new energy generation methods, which we covered here and here.

AI as a thirsty beast

In 2023, Shaolei Ren released a seminal paper that reverse-engineered the training of GPT-3 on Microsoft infrastructure, estimating the total water consumed at approximately 700,000 litres. This water is evaporated rather than recycled: approximately 80% of the water used in conventional evaporative cooling is consumed and not discharged back into the water system.
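To make the usage versus consumption split concrete, here is a minimal sketch. It assumes the roughly 80% consumed fraction quoted above applies uniformly to whatever is withdrawn; the function name and the one-million-litre withdrawal are hypothetical and used only for illustration.

```python
def split_withdrawal(withdrawn_litres: float, consumed_fraction: float = 0.8) -> tuple[float, float]:
    """Split withdrawn water into (consumed, discharged) litres.

    consumed_fraction defaults to 0.8, the approximate share quoted for
    conventional evaporative cooling. Real sites will differ.
    """
    if withdrawn_litres < 0:
        raise ValueError("withdrawn_litres must be non-negative")
    if not 0.0 <= consumed_fraction <= 1.0:
        raise ValueError("consumed_fraction must be between 0 and 1")
    consumed = withdrawn_litres * consumed_fraction   # evaporated, not returned
    discharged = withdrawn_litres - consumed          # returned to the water system
    return consumed, discharged


if __name__ == "__main__":
    # Hypothetical withdrawal of one million litres.
    consumed, discharged = split_withdrawal(1_000_000)
    print(f"consumed: {consumed:,.0f} L, discharged: {discharged:,.0f} L")
```

Under that assumption, only about a fifth of the water a site draws makes it back to the source.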

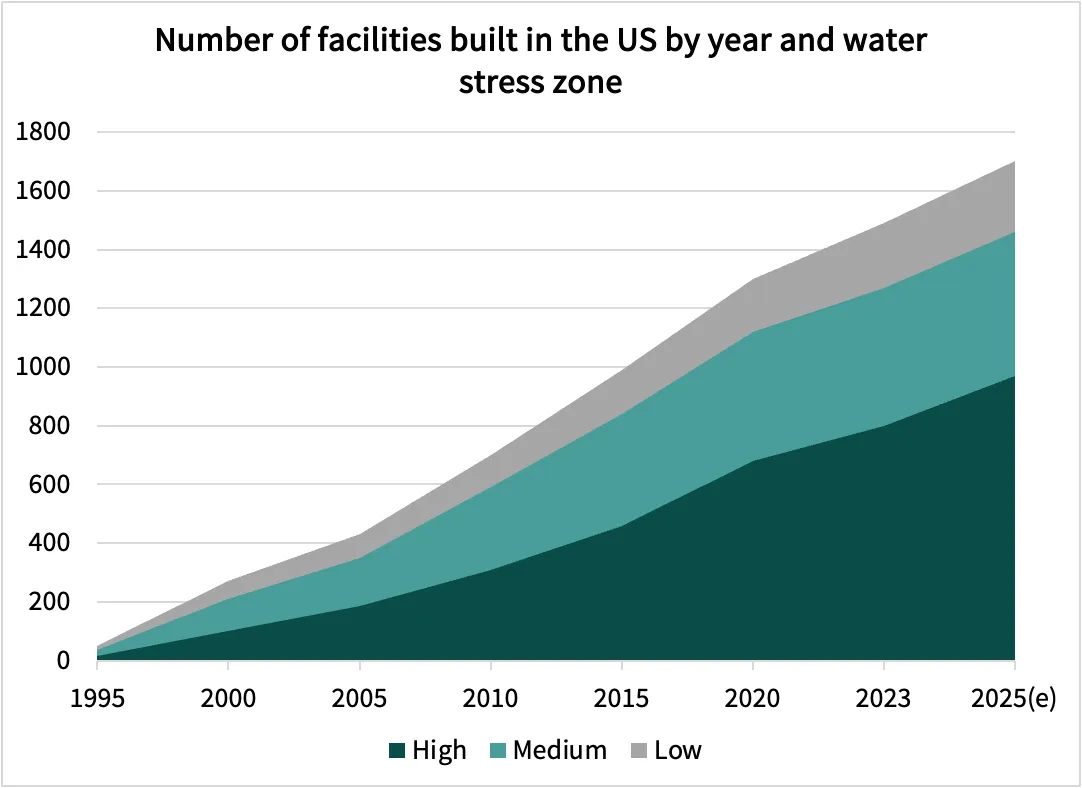

The impact of water consumption is particularly important in drier climates and water-stressed areas. In the US, approximately two in every three data centres built are in water-stressed areas. Other major countries that are welcoming data centres, such as Saudi Arabia and the United Arab Emirates, are also generally water-scarce. The correlation between data centre development and water scarcity is no coincidence. Data centres are an opportunity to extract commodified value from abundant solar energy. Given that electrons are difficult to export, industries whose product is commodified but energy-intensive become a proxy for exporting solar energy. Hence the explosion of data centre growth in solar-rich areas, which are also generally more arid. Considering that a major impact of climate change will be to increase overall levels of water stress, this is a major concern.

Water scarcity and the overall environmental impact of data centre development sparked major public protests in the Netherlands, where concerns centred on the environmental and infrastructural impacts of large-scale data centres. In Uruguay, opposition intensified amid a severe drought, as residents criticised the prioritisation of water resources for a Google data centre over local communities’ access to drinking water. Similar unrest emerged in Chile, where activists and local authorities challenged plans for a data centre they argued would worsen water scarcity and environmental degradation.

But is this really the case?

In a major pushback against the common water crisis narrative surrounding AI, Andy Masley published a breakdown of the overall water consumption of AI that suggested “the AI water issue is fake”. He argues the water usage concern is overblown for three reasons:

People are uneasy about water being used for digital products they do not value, and often ignore how common this is across many industries.

AI’s water use seems large when viewed in isolation, but small when spread across the hundreds of millions of daily users.

Large, context-free figures about data centre water use are compared to personal activities rather than to other industries, which distorts perception.

While the article is very US-centric, it offers some interesting nuances for thinking about data centre development and operations globally. Only 0.04% of American daily freshwater use is dedicated to cooling data centres. As a comparison, Masley points to the fact that 3% of daily freshwater is consumed by golf courses, 75x the share used by data centres. Considering that AI is approximately 15-20% of data centre utilisation in the US, the real delta between golf and AI is roughly 375x to 500x. The point is not to denigrate golf or dismiss real concerns about the use of water, but to maintain an objective view of the relative impact of AI's water use, which is small compared to its impact on energy procurement.
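The arithmetic behind the golf comparison can be checked in a few lines. This is a rough sketch using only the percentages quoted above; the variable and function names are ours, and it assumes AI's share of data centre water tracks its 15-20% share of utilisation.

```python
# Shares of US daily freshwater use, in percent, as quoted above.
DATA_CENTRE_SHARE_PCT = 0.04
GOLF_SHARE_PCT = 3.0


def golf_to_ai_ratio(ai_fraction_of_data_centres: float) -> float:
    """How many times more freshwater golf uses than AI workloads.

    Assumes AI's water share scales linearly with its share of
    data centre utilisation, which is a simplification.
    """
    if not 0.0 < ai_fraction_of_data_centres <= 1.0:
        raise ValueError("fraction must be in (0, 1]")
    ai_share_pct = DATA_CENTRE_SHARE_PCT * ai_fraction_of_data_centres
    return GOLF_SHARE_PCT / ai_share_pct


if __name__ == "__main__":
    print(f"golf vs all data centres: {GOLF_SHARE_PCT / DATA_CENTRE_SHARE_PCT:.0f}x")
    for fraction in (0.20, 0.15):
        print(f"golf vs AI at {fraction:.0%} of utilisation: {golf_to_ai_ratio(fraction):.0f}x")
```

The multiple is sensitive to the AI fraction: the smaller the share of data centre activity attributed to AI, the larger the gap with golf.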

Beyond reverse-engineering

Less facetiously, the fact that AI is the focus of water stress concerns, ahead of industries that are far more water-intensive, speaks to a widening perception gap around AI infrastructure amongst the public. Efforts to obscure water usage until disclosure is court-mandated do not help dispel the impression that AI companies have something to hide. The more transparent this data can become, the more informed users and tool developers can be in making sustainability-related decisions.

Mistral’s full life-cycle assessment (LCA) sets the standard so far, while Google’s disclosures are currently limited to analysis at the point of inference (they estimate that the average text-based prompt consumes 0.26 mL of water, roughly similar to Sam Altman’s original claim). More disclosures and transparency could help ground the conversation in facts and actual comparison rather than speculation and reverse-engineered estimates.
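For a sense of scale, the per-query figures can be put in the same unit. The sketch below assumes a US customary teaspoon (about 4.93 mL) and a 500 mL bottle; neither size is specified in the claims themselves, so treat both as our assumptions.

```python
US_TEASPOON_ML = 4.92892159375   # US customary teaspoon in millilitres (assumed unit)
BOTTLE_ML = 500.0                # assumed bottle size; the refrain does not specify one
GOOGLE_PROMPT_ML = 0.26          # Google's estimate for an average text prompt

# Sam Altman's claim: one-fifteenth of a teaspoon per average query.
altman_ml = US_TEASPOON_ML / 15

if __name__ == "__main__":
    print(f"Altman claim:     {altman_ml:.2f} mL per query")
    print(f"Google estimate:  {GOOGLE_PROMPT_ML:.2f} mL per prompt")
    print(f"Altman / Google:  {altman_ml / GOOGLE_PROMPT_ML:.2f}x")
    print(f"Bottle / Altman:  {BOTTLE_ML / altman_ml:,.0f}x")
```

On these assumptions the two company figures sit within about 30% of each other, while the bottle-per-query refrain is three orders of magnitude higher.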

Some countries require key disclosures on material metrics around environmental impact. However, unlike energy availability, water access is not currently a material driver of commercial decision-making in data centre deployment strategy. The industry generally focuses on Power Usage Effectiveness (PUE) as a key driver of value, whereas Water Usage Effectiveness (WUE) is often not tracked. Several countries impose PUE requirements on the construction of new data centres, but only China requires WUE as well. If other countries adopt the same requirements, particularly in the face of public pressure, hyperscalers may have no choice but to disclose water usage statistics.

These metrics also exist in context. A higher WUE is less critical for a data centre in a water-rich environment than for one in a desert, just as a higher PUE at a site powered entirely by a renewable grid is less of a concern than a higher PUE at a site powered by off-grid gas turbines. The lack of comparability has led to new metrics being proposed, such as WUE+, which takes into account embodied water, regional water context, and incentives for water reuse, while remaining relatively easy to compute and apply in practice.
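As a reference point, here is a minimal sketch of how the two headline metrics are conventionally computed: PUE as total facility energy divided by IT equipment energy, and WUE as site water use in litres divided by IT equipment energy in kWh. The class name and the example figures are hypothetical. WUE+ is not sketched because its formula is not given here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SiteYear:
    """One year of metered data for a hypothetical data centre."""
    total_facility_kwh: float   # everything the site draws, including cooling
    it_equipment_kwh: float     # energy delivered to servers, storage, network
    site_water_litres: float    # water used on site for cooling and humidification


def pue(site: SiteYear) -> float:
    """Power Usage Effectiveness: dimensionless, 1.0 is the theoretical floor."""
    return site.total_facility_kwh / site.it_equipment_kwh


def wue(site: SiteYear) -> float:
    """Water Usage Effectiveness in litres per kWh of IT energy."""
    return site.site_water_litres / site.it_equipment_kwh


if __name__ == "__main__":
    # Illustrative numbers only, not drawn from any real facility.
    example = SiteYear(
        total_facility_kwh=12_000_000,
        it_equipment_kwh=10_000_000,
        site_water_litres=18_000_000,
    )
    print(f"PUE: {pue(example):.2f}")
    print(f"WUE: {wue(example):.2f} L/kWh")
```

Neither number carries the regional context described above: the same WUE describes a very different burden in a desert than beside a large river.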

The debate surrounding AI and water usage often suffers from a lack of clear data, leaving us to guess at the true environmental cost. Without standardised WUE reporting (never mind a more nuanced metric like WUE+), we’re stuck reverse-engineering estimates and comparing bottles to swimming pools. As we look toward a future of higher compute density, the focus must shift to resource efficiency and innovative cooling technologies that mitigate environmental impact.

It is also true that any reduction in overall water usage is clearly good for the environment, all else being equal. Innovation in cooling mechanisms opens opportunities for a reduction of water consumption and a more sustainable approach to AI infrastructure development. Our next edition dives into the specific engineering breakthroughs, such as microfluidics and liquid immersion, that are bridging this gap.

The next wave of data centre infrastructure will be defined by resource efficiency. It is time to stop measuring the cost of progress in drops.