SustAIned Future: Speed, incentives & SLMs with Reframe Venture

By Xavier Evans & Louisa Mesnard.

Welcome to the Elaia Sustainable AI newsletter. Every two weeks, Elaia’s Sustainability team will dive into open questions at the nexus of two of the biggest trends of our generation: the rapid development and application of AI and our responsibility to improve societal and planetary health.

A week after we kicked off the first edition of this newsletter, Elaia’s sustainability team was on the ground at FRAME — Reframe Venture’s annual event bringing together GPs and LPs to pursue responsible investing in venture capital and unpack the future of this asset class.

One of the major questions was how to reconcile the astonishing growth in AI adoption (and associated investment) with the knowledge that the underlying infrastructure is energy- and water-intensive.

How should investors navigate this? Is it even possible to invest in sustainable AI?

We sat down with Johannes Lenhard (CEO) and Oliver Nixon (Research Lead) from Reframe Venture to reflect on these complex questions and hear their take. What followed was an argument for mindset shifts and an appreciation of how the development of the sector could organically link sustainability with effectiveness.

Speed above all else

The current playing field of AI development is defined by the pursuit of everything, all at once, and as quickly as possible. We asked the Reframe team whether there is any room for sustainability as a priority in the current winner-takes-all mentality.

“We always have choices, but at the moment we have been unwilling to make choices that develop this technology sustainably,” Johannes argues. Speed is driving everything, and all other considerations, even cost, are being deprioritised as a result.

Energy is a great example. Renewables are cheaper than fossil fuels in many places, but they take longer to bring online, so the priority is hooking up fast, dirty fossil fuels to bring compute online now.

That’s not to say it’s impossible. “Time and again, we hear that you can build AI sustainably; you can train the models sustainably. It’s simply slightly harder and takes more work,” says Johannes.

We asked him whether there could ever be a competitive business model in more sustainable AI, and if so, who would drive it. “The continental European in me wants to believe that Europe can become a leader in Sustainable AI, but not necessarily through the obvious vehicle of regulation,” he says.

Oliver has been leading a piece of work on the business case for responsible AI. He argues that real, material factors are creating a competitive advantage for AI model developers and platform providers that take a more responsible approach.

He sees embedding trust at the heart of AI systems as fundamental, particularly in areas such as procurement. Trust encompasses many areas relevant to responsible investing (safety, misinformation, bias, privacy, validity) in addition to transparency and accountability, which is where energy and water usage come in. “Corporates are already expecting adherence to guidelines such as the SustainableIT standards; startups will simply have to consider these factors if they want to sell their product.”

Changing the incentives

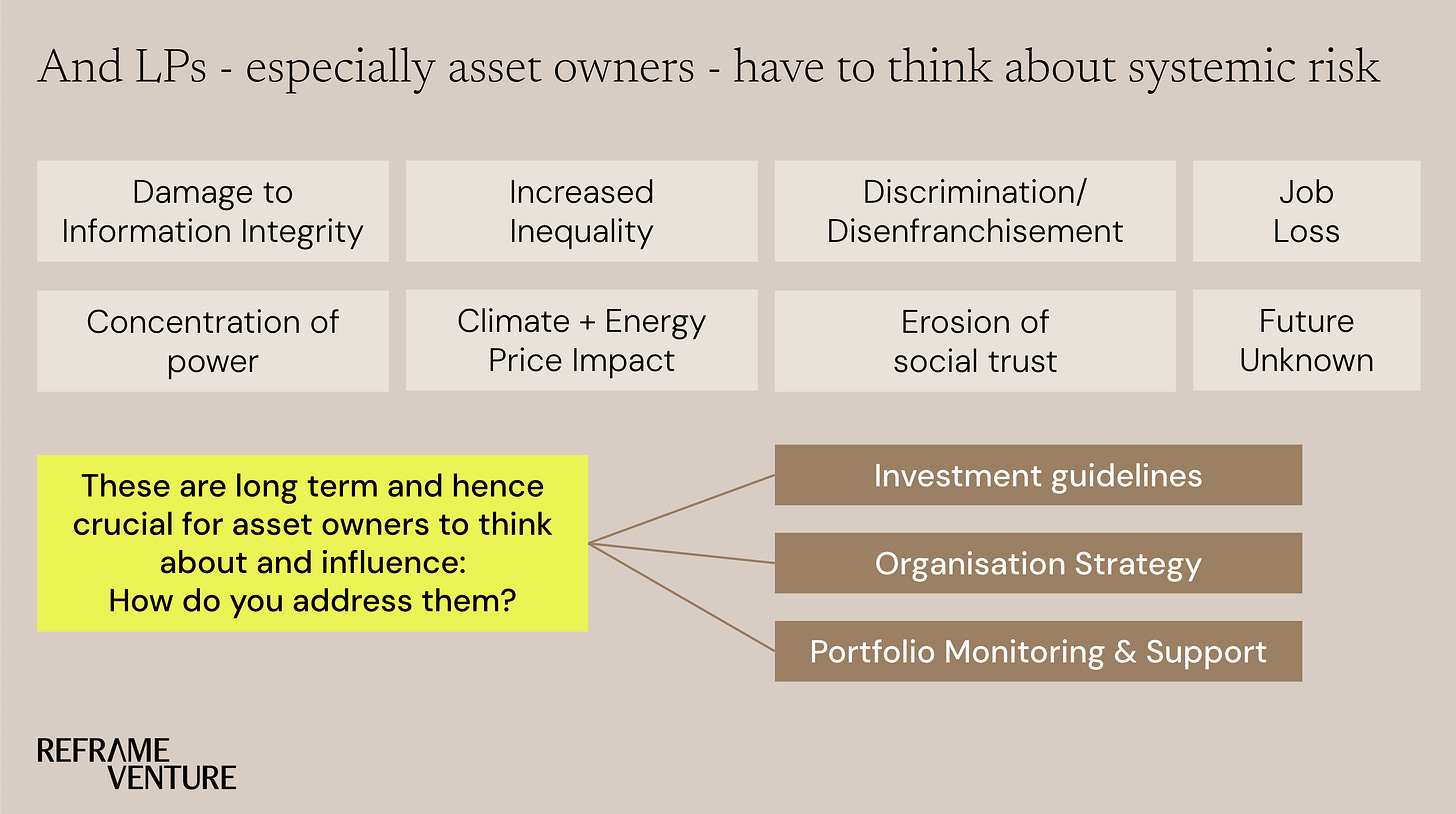

So it’s an incentives game? “Yes, and that’s where we can see change, particularly in Europe,” Johannes reiterates. At the LP level, large asset owners have significant sway: how they allocate capital acts as a leading signal to the market.

In other sectors, LPs such as Allianz are investing over $20bn in climate and clean tech solutions, building out a fund-of-funds strategy that only invests in GPs with a serious climate focus.

The strategy is inherently long-term. Rather than only chasing high returns, the weight of capital allocation accelerates technologies that will be required to address risks forecast for decades from now.

So why not the same for AI? Many LPs are also highly connected to the potential negative impacts of reckless AI development. “People in communities facing shrinking water reserves or higher power prices can also have pension or insurance plans with major LPs. So those same LPs have multiple, long-term incentives that are tied directly to local communities,” says Johannes.

The same applies to the potential workforce implications of the development of AI, which we covered in our last edition. Were there to be a significant rise in AI-driven job displacement, many asset owners would be directly impacted through their members, policyholders, and, in some cases, taxpayers.

SLMs vs LLMs, not just a question of sustainability

While LLMs dominate the popular conception of AI, researchers from NVIDIA argue that the rise of agentic AI systems is ushering in a mass of applications in which small language models (SLMs) perform a limited set of specialised tasks repetitively and with little variation.

These models trade breadth of capability for efficiency of inference. Even when general conversational abilities are required, agentic systems will simply interface with and invoke different models depending on need, the researchers argue.

Johannes sees a natural confluence of sustainability and the next stage of AI development, which will see a shift from ‘what AI can achieve’ to ‘which model can achieve it best’. The competitive race could change course, pushing model developers and implementers towards more efficient mechanisms and rendering current extrapolations of energy demand slightly overzealous.

“Particularly in healthcare, an industry attuned to human-impact variables alongside economic return, the opportunities offered by SLMs are evident,” says Johannes. Repetitive, narrowly focused tasks, handled by models trained on specific datasets and potentially hosted on edge computing, are a natural fit for SLMs. Proving a use case in one industry is often the catalyst for expansion, spurring a potential trend towards high-efficiency models, with benefits for environmental sustainability as well as trust.

Reasons for optimism

We finished our discussion with a return to the original question: is it even possible to invest in sustainable AI? Johannes and Oliver argue that it is possible, but not inevitable.

“The current paradigm of speed and scale driven by hyperscalers and the largest possible LLMs is not conducive to sustainable deployment of compute, but nor is it the necessary end-state of the AI revolution,” says Oliver.

“The conversations we are having with LPs suggest that in providing the capital for GPs to invest in the next generation of AI champions, they have clear incentives in favour of the responsible development of the technology,” says Johannes. “As it matures, a more complex and interconnected ecosystem of more efficient systems is likely, as a race of speed becomes a more traditional competition of cost and quality.”

Reframe Venture is currently hosting a series of workshops across its network, diving deep into the opportunities in responsible AI. The team expects to develop new scenarios that help investors think more effectively about long-term capital allocation for AI. Johannes reiterates that “we need better narratives: ones that embrace nuance and make the case for a more sustainable approach to AI development.”
